Decentralised AI

AI's problems have a solution - Web3

A $7.5 Billion AI token merger of SingularityNET, Fetch.ai and Ocean Protocol.

Stability AI CEO resigns to join decentralised AI.

Tether USDT moves towards decentralised AI.

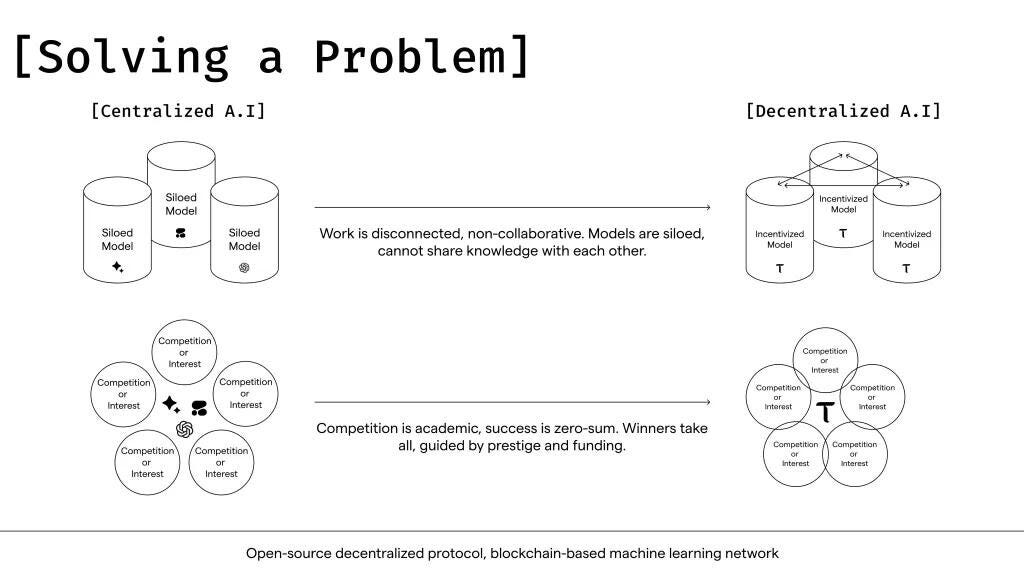

The overall pace of AI development in large, deep-pocketed organisations, with the rush to control, regulate, hire and compete driven by the FOMO of the AI race, is pushing AI out of centralised structures.

So what do AI models and AI agents need? Trust, verification, less unfair/opaque control - exactly what blockchains and decentralisation offer.

'You cannot beat centralised AI with more centralised AI.'

Let's explore why this is important and how it works.

WHY? Because this tech offers enhanced data privacy and cost savings.

Decentralised AI refers to a type of technology architecture for AI that uses blockchain for storage, processing and distribution of data across a network of nodes. Users can train models without having to share their data or lose control of it.

By leveraging blockchain and cryptographic economic incentives, decentralised AI encourages global participants to contribute computing power and data. Pricing for services to various models and domains, hosting of infrastructure, contributions and so on is set by the market dynamics of demand and supply, in a secured and verified manner. These economic incentives support the operations, and participants earn further rewards for their contributions. Thousands already participate in this manner and millions of rewards have been given. This is a different Capex-Opex model from the usual ones with credits, subscriptions, etc., and it leads to cost savings.

Decentralisation utilises a network of nodes instead of a single powerful server. This allows AI models to be trained collaboratively and more effectively, using a variety of datasets securely. It mitigates the risk of large-scale privacy breaches by minimising the concentration of sensitive information in a single location, and it avoids a single point of failure and unauthorised access. Overall, this architecture-service-access model improves scalability, collaboration, parallel processing, security and privacy protection.
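As a rough illustration of pricing set by demand and supply, the sketch below matches compute requests to the cheapest available offers. Everything in it (the `Offer` and `Request` types, the prices, the `match_requests` helper) is hypothetical and not taken from any specific network; real marketplaces add escrow, verification and on-chain settlement on top.

```python
from dataclasses import dataclass

@dataclass
class Offer:
    provider: str
    gpu_hours: int    # capacity the provider is willing to lease
    price: float      # asking price per GPU hour (made-up token unit)

@dataclass
class Request:
    buyer: str
    gpu_hours: int
    max_price: float  # highest price per GPU hour the buyer accepts

def match_requests(offers, requests):
    """Greedy matching: highest-paying buyers first, cheapest offers first."""
    # Work on copies so the caller's offers are not modified.
    book = sorted((Offer(o.provider, o.gpu_hours, o.price) for o in offers),
                  key=lambda o: o.price)
    deals = []
    for req in sorted(requests, key=lambda r: -r.max_price):
        needed = req.gpu_hours
        for offer in book:
            # Offers are sorted by price, so nothing cheaper follows.
            if needed == 0 or offer.price > req.max_price:
                break
            take = min(needed, offer.gpu_hours)
            if take:
                deals.append((req.buyer, offer.provider, take, offer.price))
                offer.gpu_hours -= take
                needed -= take
    return deals
```

In this toy version a buyer whose maximum price is below every remaining ask simply gets nothing, which is the market signal for providers to lower prices or for buyers to bid higher.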

WHAT?

Refreshing AI, ML and GenAI: Artificial intelligence is as old as time for millennials, and we learnt about it in almost every hype cycle from Big Data to ML and so on. The overall field relies on machines to learn and execute tasks without explicit directions on what to output, thereby imitating human behaviour. Machine Learning algorithms, a subset of AI, train on input data, and the trained model makes predictions. Deep Learning models, a subset of ML, use layers of algorithms in the form of artificial neural networks to return results for more complex use cases.

A subset of deep learning is Generative AI. Generative AI models can produce new content based on what is described in the input. A Large Language Model (LLM) is a form of Generative AI.

In a nutshell, AI is advanced analytics at scale, which requires compute, storage and a distribution network for access. AI business models take data about people, train models on it and sell the use of that data. Data monetisation in this form comes at the cost of data privacy. It also brings loss of control, sensitive information leaks and aggregated power, and the owner or creator of the data usually does not get paid in any form.

Decentralisation: Decentralisation lets the owner of data (people, entities, companies, or even programmable things) control the data, protect it, choose who to share it with, at what price, how and for how long, earn passive income from it, and so on. This involves blockchain for verification, plus decentralised compute, network and storage across the app, access and infra layers, following the tenets of technology architecture. Web3 is simply owning something (data, image, song, solar assets, etc.) on the Internet in a decentralised and verifiable manner.

Decentralised AI is not new.

Open Mined, a 6+ year old non-profit, has been running on Deep Learning, Federated Learning, Homomorphic Encryption, and Smart Contracts.

+ Federated Learning combines a centralised model with decentralised training. Once models are trained independently on nodes (as in Google's 2017 federated learning with mobile devices as nodes), the updated model weights are sent back to a central server. Research papers from 2021-2023 report that decentralised learning offers significant savings in communication resources compared with federated learning.

+ Homomorphic Encryption, which both Microsoft and Cisco have been exploring since 2015-16, is computing directly on encrypted data, which avoids the decrypt, compute, re-encrypt cycle. Microsoft SEAL, an open-source, MIT-licensed homomorphic encryption library, was released in 2018. (Comparing Zero Knowledge Proofs of blockchain for authentication with Fully Homomorphic Encryption for privacy in computation is a topic for another day.)
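The federated learning loop in the list above can be sketched in a few lines. This is a minimal illustration of federated averaging under simple assumptions: a one-variable linear model, three hypothetical nodes with synthetic private data drawn from y = 3x + 1, and no encryption or networking. The helper names are made up for this example.

```python
import random

def local_update(weights, data, lr=0.1, epochs=20):
    """Train y = w*x + b on one node's private data with plain gradient descent."""
    w, b = weights
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / len(data)
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / len(data)
        w -= lr * grad_w
        b -= lr * grad_b
    return (w, b)

def federated_average(updates, sizes):
    """Server step: average the node weights, weighted by local dataset size."""
    total = sum(sizes)
    w = sum(u[0] * n for u, n in zip(updates, sizes)) / total
    b = sum(u[1] * n for u, n in zip(updates, sizes)) / total
    return (w, b)

def make_node_data(rng, n):
    """Hypothetical private dataset: y = 3x + 1 plus a little noise."""
    xs = [rng.uniform(-1, 1) for _ in range(n)]
    return [(x, 3 * x + 1 + rng.gauss(0, 0.01)) for x in xs]

rng = random.Random(0)
nodes = [make_node_data(rng, n) for n in (20, 50, 30)]

global_weights = (0.0, 0.0)
for _ in range(30):
    # Each node trains locally; only weights, never raw data, reach the server.
    updates = [local_update(global_weights, data) for data in nodes]
    global_weights = federated_average(updates, [len(d) for d in nodes])
```

After the rounds complete, `global_weights` should sit close to the underlying (3, 1), even though no node ever shared its data points. A fully decentralised variant would replace the single `federated_average` call with peer-to-peer exchange of weights between nodes.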

HOW? How does it all work?

Decentralised AI projects come on different platforms and in different forms.

It is not built from lists of prioritised technical and functional requirements based on use cases gathered from business and IT, with SLAs from procurement and support, or from sizing projections based on current and expected usage estimated internally.

Want to be ready for high-volume sale discount days? Want 10x scalability on your enterprise architecture? Want to be able to choose various AI / ML models for different sub-use-cases? Yes, but you do not want to buy and keep all that infrastructure? Well, you can lease compute securely on a marketplace.

SingularityNET is an open-source decentralized AI marketplace platform where anyone can access, contribute to, and monetize AI services. The platform utilizes blockchain to provide secure, transparent, and fair services. Developers publish their services and are able to charge for the use of their services using the native AGIX token. These tokens can be staked to earn passive income. The ecosystem has DeFi, Robotics, Biotech and Longevity, Gaming and Media, Entertainment and Art along with AI. Users can pay in fiat currency using PayPal as well.

Bittensor turns Machine Learning into a tradable commodity on a peer-to-peer intelligence market. It uses Proof of Intelligence to reward nodes for adding useful machine-learning models and results. If a node's machine learning work is accurate and valuable, it has a better chance of being selected to add a new block to the chain and earn TAO tokens as a reward. Its collusion-resistant mechanism rewards honestly selected weights, whereas the dishonest ones decay into irrelevance. Bittensor's design is ambitious and thought through in detail, more than can be covered in short! It compares itself to Bitcoin for the AI ecosystem, with supply (miners as the AI layer and validators as the blockchain layer) and demand (applications and users). Its network dynamics include the concept of subnets, where each subnet offers different rewards for different AI applications and also allows for off-chain ML on large datasets, promoting new models and new ideas rather than just one model. Bittensor also employs a Mixture of Experts (MoE) model, leveraging multiple specialised models working in collaboration for more comprehensive outcomes that outperform single-model methods.
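To make the Mixture of Experts idea concrete, here is a toy sketch of gated expert selection. It is a generic MoE illustration, not Bittensor's implementation: the two experts, the gating rule and the `top_k` parameter are all assumptions chosen to keep the example small.

```python
import math

def softmax(scores):
    """Turn raw gate scores into weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical experts, each standing in for a specialised model.
def expert_small(x):
    return 2 * x

def expert_large(x):
    return x * x

EXPERTS = [expert_small, expert_large]

def gate(x):
    """Toy gating rule (an assumption): favour expert_large as x grows."""
    return softmax([-x, x])

def mixture_of_experts(x, top_k=1):
    """Combine the top_k experts, weighted by their renormalised gate weights."""
    weights = gate(x)
    ranked = sorted(range(len(EXPERTS)), key=lambda i: weights[i], reverse=True)[:top_k]
    norm = sum(weights[i] for i in ranked)
    return sum(weights[i] / norm * EXPERTS[i](x) for i in ranked)
```

With `top_k=1` the gate routes each input to a single specialist; with `top_k=2` it blends both. In a real system the gate is itself learned, and the experts are full models rather than one-line functions.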

Gensyn network is a hyper scalable Machine Learning Compute Protocol. It offers a cluster of global computing resources that are accessible to everyone, at any time. The goal is to make AI model training possible on any device around the world by connecting many different computing devices, from idle data centers to personal laptops with GPUs. It introduces a new system called "probabilistic proof-of-learning," which uses data from gradient-based optimization, a key method in machine learning. This technique provides a scalable and reliable way to verify work without the need for replication, making machine learning tasks more efficient.

Akash.network has come a long way, from the Huawei dePIN research papers of 2022 explaining cloud computing on open cloud resources with a community-driven ecosystem, to NVIDIA H100 and A100 rental pricing and quantitative cost benefits over cloud deployments. Akash has delivered compute up to 70% cheaper than AWS or Google Cloud with comparable hardware (high-density P100s and RTX 3090s).

Freshly baked: ThumperAI, a Generative AI startup, trained a foundation model on Akash Network and shared the goals, decisions and tradeoffs, architecture design, and code for distributed training across 48 GPUs across two providers, with outcomes and learnings.

Ritual is a decentralized AI computing platform with a focus on creating an incentivized network connecting distributed computing devices. It emphasizes five key areas: powering hosting, sharing, inference, and fine-tuning of AI models, along with an API layer for model access, a proof layer ensuring computational integrity, censorship resistance, and privacy.

Forbes Digital Assets posted about this trend a month ago, which should get CXOs curious if they are not already. OORT operates the Proof of Honesty (PoH) protocol to verify decentralised resources and steer them towards a globally optimal goal. For example, it incentivises the service providers to cache frequently accessed dataset pieces in proximity and to deploy in a zone with high and reliable bandwidth. As a result, all service providers are independently working towards a globally optimal goal; thus, PoH optimises the network topology and the resource allocation in a decentralised manner.

Ocean Protocol is a decentralised data governance protocol. It has datatokens to enable token-gated access control, data wallets, data DAOs and Compute-to-Data to buy and sell data privately. This enables data monetisation (with ownership) by building AI dApps, token-gated REST APIs or even data markets, with projects such as Acentrik - Mercedes Benz, Gaia-X - Future Mobility Data Marketplace via deltaDAO and so on.

Fetch.ai, the third partner in the AI token merger, aims to provide a permissionless network where anyone can connect and access datasets using autonomous AI. Autonomous agents, incentivised with payment for tasks performed and for learning, can carry out various tasks.

There are many more startups and young platforms, such as 0xGPU. 'Decentralized AI will create the next wave of Silicon Valley unicorns' is a March 2024 piece from crypto.news.
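The combination of token-gated access and Compute-to-Data can be pictured with a small sketch. This is purely illustrative and does not use Ocean Protocol's API: the `TokenGatedDataset` class, its in-memory balances and the wallet string are hypothetical stand-ins for on-chain datatokens and wallets.

```python
class TokenGatedDataset:
    """Hypothetical data asset: holders of a datatoken may run approved jobs on it."""

    def __init__(self, rows, approved_jobs):
        self._rows = rows                # private data, never returned directly
        self._approved = approved_jobs   # job name -> function over the rows
        self._balances = {}              # wallet address -> datatokens held

    def mint(self, wallet, amount=1):
        """Data owner grants (or sells) access tokens to a wallet."""
        self._balances[wallet] = self._balances.get(wallet, 0) + amount

    def compute(self, wallet, job_name):
        """Spend one token to run an approved job; only the result leaves."""
        if self._balances.get(wallet, 0) < 1:
            raise PermissionError("no datatoken for this dataset")
        if job_name not in self._approved:
            raise ValueError("job not approved by the data owner")
        self._balances[wallet] -= 1
        return self._approved[job_name](self._rows)


dataset = TokenGatedDataset(
    rows=[4, 8, 15],
    approved_jobs={"mean": lambda rows: sum(rows) / len(rows)},
)
dataset.mint("0xabc")
result = dataset.compute("0xabc", "mean")
```

The buyer receives the aggregate in `result` but never sees the rows, and a second call without a new token is refused. That is the essence of selling the use of data while keeping ownership of it.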

PS: Why do organisations still work on technology in a centralised manner if decentralisation is better?

Inertia: Centralised data storage, data monetisation, one view, one system, central repository, one tech solution vendor from T1-L1 procurement - this is how our technology has been built for decades, what we have told our customers, and what our largest customers have approved through various committees and bought with large sums of money. Centralised systems with a hierarchical order of decisions are still the bread and butter of large organisations. Hence, it is no surprise that the word 'decentralised' puts off those who seek the comfort of known, tried and tested projects, that 'Web3' brings knee-jerk demands for a business case, and that 'blockchain' makes the naysayers simply ignore the topic as long as mandated targets are met.

Cultural mindset: So how long will there be patchwork and upgrades on an old architecture? One answer could be 'Look, the real world still uses mainframes' and the other could be 'What if we can build new business and service models with new technology?' The choice to be skeptically early in technology has always paid off. Choosing a better Capex-Opex model is, after all, a human decision based on culture, bias, ambition, inertia, directional leadership, etc., no matter how much technical and factual evidence there may be.

Decentralisation is a cultural mindset. It is a change in hierarchy and ownership.

Media sways: Hopefully future news coverage delves deeper and does some multidisciplinary journalistic research into how various mindsets can realign their perspectives, with a deeper look at the combination of technologies, the nature of incentives and the importance of private key management, rather than only prices, purchases, hikes, falls, bans, pitting projects against one another, witch-hunts of technologies and all that.

Are large centralised companies bad and decentralised ones good? No.

Anything that is successful but eventually becomes opaque or unfair will make way for the next better options. Technology and society are cyclic in the larger entropy.

Various blockchain projects, Web3 products, blockchain-cloud projects, etc use mid-way solutions to optimise the core proposition or to match enterprise grade quality in essential parameters.

About a year ago, in early 2023, AI's strong growth caught everyone's attention, including that of the Web3 and blockchain world, for its combinatorial capabilities. I posted about Web3 x AI, noting how the largest companies were already thinking and working along these lines. Some naysayers ignored it, a tech innovator wrote to me calling it a commodity post, a cloud veteran dismissed it with 'I do not believe in Web3', and in general various conversations scoffed at these ideas as a bear market sector looking for the AI saviour for funding. Well, even Jeff Bezos had to explain 'What is the Internet?' in the 90s, so explaining new technology is not going to be easier despite all the technology we have.

And, just maybe, AI's problems getting solved in decentralised architectures will get people's attention back to where it should be - storage, compute and network.

References:

SingularityNet, Fetch.AI, Ocean Protocol merger will drive decentralized AI development, Source: CoinTelegraph and others. March 2024

Decentralized Federated Learning: A Survey and Perspective arXiv:2306.01603

A comparison study of centralized and decentralized federated learning approaches utilizing the transformer architecture for estimating remaining useful life, Reliability Engineering & System Safety, Volume 233, 2023 https://www.sciencedirect.com/science/article/abs/pii/S0951832023000455

Introducing Open Mined: Decentralised AI, Awa Sun Yin, Aug 2017

Bittensor: Rise of decentralised AI by Greyhorn Asset Management, Feb 2024

Decentralized AI On Blockchain Rivals OpenAI's Lead: Forbes Digital Assets, Feb 2024